What Is Subspecialty Radiology?

By Dr. Zain Qazi

Table of Contents

- The Training Pipeline: 13 to 14 Years Before an Independent Read

- The Eight Recognized Subspecialties

- The 30% Error Rate That Technology Has Not Fixed

- The Spine MRI Study: One Patient, 10 Centers, Zero Consensus

- What the Data Actually Shows About Subspecialty Matching

- The Radiology Workforce Shortage

- How Subspecialty Matching Works in Teleradiology

Key Takeaways

- Subspecialty radiologists train for 13 to 14 years before reading their first independent case. 96% of diagnostic radiology residents now pursue fellowship [1].

- The retrospective diagnostic error rate in radiology has hovered near 30% since 1949, despite transformational advances in imaging technology [3].

- Subspecialty-matched reads show discrepancy rates of 2.0% to 2.7%. Non-subspecialty reads: 12.4% to 40% depending on body region [8, 9].

- In the Herzog spine MRI study, one patient visited 10 imaging centers. The result: 49 distinct findings, zero found by all 10, and an average miss rate of 43.6% [5].

- The AAMC projects a 17,000 to 42,000 physician shortfall by 2033, with radiology among the hardest-hit specialties. Demand is outpacing workforce growth [12].

Radiology looks nothing like it did thirty years ago. 3T MRI scanners produce sub-millimeter resolution. CT protocols reconstruct three-dimensional volumes from a single breath-hold. AI algorithms flag suspected pathology before the radiologist opens the study. Yet one number has barely moved: the diagnostic error rate.

That stubborn number is the reason subspecialty radiology exists. The imaging technology is not the bottleneck. The human reading it is. And the single most effective intervention we have for reducing interpretive error is matching the right study to the right reader.

This article walks through the training pipeline, the eight subspecialty domains, the data on error and accuracy, the looming workforce shortage, and what it all means for imaging centers, clinicians, and attorneys who depend on radiology reports every day.

The Training Pipeline: 13 to 14 Years Before an Independent Read

Becoming a subspecialty radiologist is one of the longest training paths in medicine. The sequence: four years of medical school, one year of clinical internship, four years of diagnostic radiology residency, and one to two years of subspecialty fellowship. That is 13 to 14 years of post-secondary education before a fellowship-trained radiologist reads a case independently.

The fellowship year (or years) is where generalists become specialists. A neuroradiology fellow spends 12 months reading nothing but brain, spine, and head-and-neck imaging. An MSK fellow reads thousands of joint, bone, and soft tissue studies. That concentration builds a pattern library that generalist training simply does not produce.

How common is fellowship? According to data published in Radiology (RSNA, 2022), 96% of diagnostic radiology residents now pursue at least one fellowship [1]. The field has voted with its feet. Generalism in radiology is the exception, not the rule.

Rosenkrantz et al. (2018) found that only about 6% of practicing radiologists describe themselves as pure generalists with no subspecialty focus [2]. The remaining 94% either completed formal fellowship or developed a functional subspecialty through years of focused practice. The profession has recognized, implicitly and explicitly, that the breadth of modern imaging exceeds what any single physician can master.

The Eight Recognized Subspecialties

Radiology's subspecialties map roughly to anatomical regions, though some are defined by technique or patient population. Each carries its own board certification pathway and clinical focus.

| Subspecialty | What It Covers | Clinical Focus |
|---|---|---|
| Neuroradiology | Brain, spine, head and neck | Stroke, tumors, degenerative disease, trauma, demyelination |
| Musculoskeletal (MSK) | Bones, joints, ligaments, tendons, muscles | Sports injuries, arthritis, fractures, soft tissue masses, post-surgical evaluation |
| Body/Abdominal | Abdomen, pelvis, GI tract, GU system | Liver lesions, renal masses, bowel pathology, oncologic staging |
| Cardiothoracic | Heart, lungs, great vessels, mediastinum | Pulmonary embolism, lung nodules, cardiac function, aortic disease |
| Breast Imaging | Mammography, breast MRI, ultrasound | Screening, diagnostic workup, biopsy guidance, risk assessment |
| Interventional Radiology | Image-guided procedures | Now recognized as its own primary specialty with a dedicated residency pathway |
| Nuclear Medicine | PET, SPECT, molecular imaging | Oncologic staging, cardiac perfusion, thyroid disease, theranostics |
| Pediatric Radiology | All imaging modalities in children | Congenital anomalies, growth-plate injuries, pediatric-specific pathology, dose optimization |

The depth within each domain is significant. A neuroradiologist does not just "know brain imaging." They differentiate between dozens of white matter disease patterns, grade gliomas by imaging phenotype, identify subtle cord compression that a generalist might call "degenerative changes," and recognize vascular malformations that mimic tumors. That pattern library is the product of thousands of focused reads.

Interventional radiology warrants a special note. The American Board of Medical Specialties recognized IR as a primary medical specialty in 2012. It now has its own integrated residency pathway, separate from diagnostic radiology. IR physicians perform minimally invasive procedures (biopsies, embolizations, drain placements) under imaging guidance. At Expert Radiology, our diagnostic reads cover the interpretive subspecialties: neuro, MSK, body, and more.

The 30% Error Rate That Technology Has Not Fixed

In 1949, a radiologist named L. Henry Garland published a study that quietly became one of the most cited findings in the field. He asked radiologists to re-read their own chest X-rays after a delay. Roughly 30% of the time, they changed their interpretation. That figure was shocking at the time. What is more shocking is that it has not changed.

Adrian Brady's comprehensive review in the British Journal of Radiology (2017) surveyed decades of error-rate literature and reached a blunt conclusion: retrospective disagreement rates in radiology remain in the 20% to 30% range, "despite dramatic improvements in imaging technology" [3]. The scanners improved. The readers did not, at least not at a population level.

The real-time miss rate is lower, typically estimated at 3% to 5% in prospective studies. But even at 4%, consider the scale. An estimated one billion diagnostic imaging studies are performed worldwide each year. A 4% miss rate across that volume translates to 40 million errors annually.
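The scale argument is plain multiplication, and it is worth seeing across the whole 3% to 5% range rather than at 4% alone. A minimal sketch, assuming the study volume and miss-rate estimates quoted above and, as a simplification, one uniform rate applied to every study:

```python
# Estimates quoted in the text; neither is a measured value.
ANNUAL_STUDIES = 1_000_000_000      # estimated diagnostic studies per year
MISS_RATE_RANGE = (0.03, 0.05)      # prospective real-time miss rate range


def expected_misses(studies: int, miss_rate: float) -> int:
    """Expected missed findings, assuming one uniform rate for every study."""
    return round(studies * miss_rate)


low, high = (expected_misses(ANNUAL_STUDIES, rate) for rate in MISS_RATE_RANGE)
midpoint = expected_misses(ANNUAL_STUDIES, 0.04)

print(f"{low:,} to {high:,} misses per year; {midpoint:,} at a 4% rate")
```

Real miss rates vary by modality and study type, so the uniform rate is only a way to size the problem, not a forecast.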

A 2022 editorial in RSNA News framed the problem directly: "A 1949 radiologist reading chest X-rays on film wouldn't recognize a modern radiology department. The workstations, the modalities, the resolution, the speed. And yet the error rate is the same. That's a human factor, not a technology factor." [4]

This is not a criticism of radiologists as individuals. It is a structural reality. Human visual perception has inherent limits: inattentional blindness, satisfaction of search (finding one abnormality and stopping), and the cumulative fatigue of reading 50 to 100 studies in a shift. The question is not whether errors happen. The question is what systems reduce them. Subspecialty matching is the most evidence-backed answer.

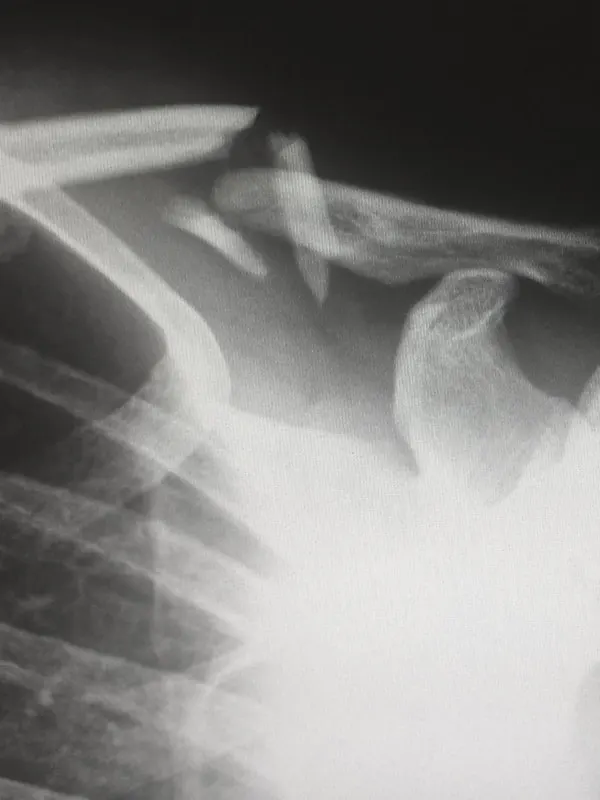

The Spine MRI Study: One Patient, 10 Centers, Zero Consensus

If there is a single study that captures the problem, it is Herzog et al. (2017), published in The Spine Journal [5].

The design was simple. One patient with low back pain visited 10 different MRI centers over a three-week period. Each center scanned and interpreted the lumbar spine independently. The researchers then compared the reports.

The results were staggering:

- 49 distinct findings were reported across all 10 centers

- Zero findings appeared in all 10 reports

- 32.7% of findings were reported by only a single center

- The average miss rate was 43.6% per center

- Inter-rater agreement (Cohen's kappa): 0.20 (poor)

Think about what that means in practice. A patient with genuine spinal pathology can walk into two different imaging centers and receive two fundamentally different reports. The treatment plan, the legal case, the insurance authorization: all of it downstream of a report that might share only half its findings with the report down the street.
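The headline metrics from a study of this kind can be computed from nothing more than the set of findings each report mentions. The sketch below uses hypothetical toy data (three centers, invented findings, and an assumed reference list), not the Herzog data, to show how "distinct findings," "reported by all," "reported by one," and "average miss rate" are derived:

```python
from collections import Counter

# Hypothetical toy data: findings mentioned in each center's report.
reports = {
    "center_a": {"L4-L5 disc herniation", "L5-S1 facet arthropathy"},
    "center_b": {"L4-L5 disc herniation", "L3-L4 disc bulge"},
    "center_c": {"L5-S1 facet arthropathy", "L3-L4 disc bulge",
                 "L4-L5 central stenosis"},
}

# Hypothetical reference standard the reports are scored against.
reference = {"L4-L5 disc herniation", "L5-S1 facet arthropathy",
             "L4-L5 central stenosis"}

# How many centers reported each finding.
counts = Counter(f for findings in reports.values() for f in findings)

distinct_findings = len(counts)
reported_by_all = sorted(f for f, n in counts.items() if n == len(reports))
reported_by_one = sorted(f for f, n in counts.items() if n == 1)

# Miss rate per center: share of reference findings absent from its report.
miss_rates = {
    center: len(reference - found) / len(reference)
    for center, found in reports.items()
}
average_miss_rate = sum(miss_rates.values()) / len(miss_rates)

print(f"Distinct findings: {distinct_findings}")
print(f"Reported by every center: {reported_by_all}")
print(f"Reported by a single center: {reported_by_one}")
print(f"Average miss rate: {average_miss_rate:.1%}")
```

Even in this three-center toy, no finding appears in every report, which is the same pattern the study observed at larger scale.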

The study did not break down results by subspecialty training of the interpreting radiologist. But the implication is clear. When spine MRI interpretation varies this dramatically, the reader's depth of training in spine imaging is not a nice-to-have. It is the variable that matters most.

For attorneys handling injury cases, the Herzog study is required reading. It is the single best piece of evidence that not all radiology reports are created equal, and that the choice of who reads the images can change the entire trajectory of a case.

What the Data Actually Shows About Subspecialty Matching

Here is where intellectual honesty matters. The data on subspecialty matching is strong, but it is nuanced. It does not show that generalists are incompetent. It shows that complexity is the variable that determines when subspecialty training makes a measurable difference.

The largest study to date, published by Rosenkrantz et al. in the American Journal of Roentgenology (2022), analyzed 5.9 million examinations [6]. For routine, acute-care community reads, they found no statistically significant difference in diagnostic accuracy between generalist and subspecialist radiologists. A straightforward chest X-ray for pneumonia, a CT head for acute trauma: a well-trained generalist handles these as well as a subspecialist.

But for advanced and complex examinations, the picture changes sharply. And that is where most high-stakes reads live: complex spine MRI, oncologic staging, pediatric anomalies, body MRI for equivocal lesions.

The body-region-specific data tells the real story:

| Study Domain | Subspecialty Discrepancy | Non-Subspecialty Discrepancy | Source |
|---|---|---|---|
| MSK (oncology) | 9.2% | 27.9% | Skeletal Radiology, 2019 |
| Neuroradiology (QC) | 2.0% | 12.4% | Zan et al., AJNR, 2012 |
| Pediatric imaging | Subspecialist correct 90.2% | 41.8% overall disagreement | Lam et al., AJR, 2012 |
| Body MRI | | 68.9% discrepancy when read outside subspecialty | Kostrubiak et al., AJR, 2020 |
| MSK (general) | 2.7% | Variable | Chalian et al., AJR, 2016 |

The Zan et al. neuroradiology study deserves attention [8]. In a large academic quality-control program, neuroradiologists reading within their subspecialty produced discrepancy rates of just 2.0%. When the same types of studies were read by radiologists outside the subspecialty, discrepancies rose to 12.4%. That is roughly a six-fold difference in error rate based solely on training match.
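The fold difference is simply the ratio of the two discrepancy rates. A minimal sketch that computes it, along with the absolute gap in percentage points, for the two table rows that report both rates (variable names are illustrative):

```python
# (subspecialty %, non-subspecialty %) from the table rows that report both.
discrepancy_rates = {
    "Neuroradiology (QC)": (2.0, 12.4),
    "MSK (oncology)": (9.2, 27.9),
}

fold_difference = {}
for domain, (matched, unmatched) in discrepancy_rates.items():
    fold_difference[domain] = unmatched / matched   # relative difference
    gap_points = unmatched - matched                # absolute difference
    print(f"{domain}: {fold_difference[domain]:.1f}x, "
          f"{gap_points:.1f} percentage points")
```

The ratio and the gap answer different questions: the neuroradiology ratio is larger, while the MSK oncology gap in percentage points is wider.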

The pediatric data from Lam et al. is equally striking [9]. In a second-opinion program at a children's hospital, overall disagreement between the original read and the subspecialty re-read was 41.8%. When there was disagreement, the pediatric subspecialist was correct 90.2% of the time. Children are not small adults. Their anatomy, pathology, and normal variants differ enough that generalist training consistently falls short.

The Kostrubiak body MRI study found a 68.9% discrepancy rate when body MRI studies were read outside the subspecialty [10]. Body MRI is technically demanding. Sequences are complex, artifact-prone, and require specific knowledge of hepatic phases, diffusion-weighted imaging patterns, and organ-specific pathology. Reading body MRI without body imaging fellowship training is, statistically, closer to guessing than diagnosing.

The Radiology Workforce Shortage

The need for subspecialty expertise is growing. The supply of radiologists is not keeping up.

The Association of American Medical Colleges (AAMC) projects a shortfall of 17,000 to 42,000 physicians across specialties by 2033, with diagnostic radiology among the most affected fields [12]. The pipeline is constrained at every stage: limited residency slots, long training timelines, and retirement of the baby-boomer physician cohort.

The Harvey L. Neiman Health Policy Institute painted a more specific picture in its 2025 workforce analysis. The radiology workforce is projected to grow by 25.7% through 2055. That sounds adequate until you see the demand side: imaging utilization is projected to grow by 26.9% over the same period [12]. The gap is not dramatic in percentage terms, but it compounds. By the mid-2030s, the shortfall becomes operationally significant. Rural and community hospitals feel it first.

COVID-era attrition accelerated the problem. Physician burnout in radiology runs between 51% and 54% depending on the survey and year [13]. Post-pandemic, early retirement rates among radiologists ran 50% higher than historical baselines. The physicians leaving are disproportionately senior, experienced subspecialists. Their replacements are years away from completing fellowship.
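To see how the two projections compare, it helps to put supply and demand on a common index. A minimal sketch, assuming both are indexed to 100 today and that both projections hold; the index baseline is an illustrative assumption and not part of the Neiman analysis:

```python
# Growth projections through 2055, as quoted above.
WORKFORCE_GROWTH = 0.257
DEMAND_GROWTH = 0.269

# Assumption for illustration: supply and demand both start at index 100.
supply_index = 100 * (1 + WORKFORCE_GROWTH)
demand_index = 100 * (1 + DEMAND_GROWTH)

gap_points = round(demand_index - supply_index, 1)
workload_ratio = demand_index / supply_index   # studies per radiologist vs. today

print(f"Supply index: {supply_index:.1f}, demand index: {demand_index:.1f}")
print(f"Gap: {gap_points} index points")
print(f"Workload per radiologist relative to today: {workload_ratio:.3f}x")
```

Under these assumptions the projected gap adds to whatever shortfall already exists at the baseline, which the index deliberately ignores by starting both series at 100.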

For imaging centers, the staffing math is brutal. You need subspecialty coverage across neuro, MSK, body, and chest. Each subspecialist costs $400,000 to $600,000 annually in compensation. Recruiting takes 12 to 18 months. And when a subspecialist leaves, you lose both the expertise and the referring physician relationships built around that expertise. This is exactly the gap that subspecialty-focused teleradiology partners are designed to fill.

How Subspecialty Matching Works in Teleradiology

Teleradiology is not a new concept. Remote interpretation has existed since the early days of PACS. What has changed is the sophistication of case routing. Modern subspecialty teleradiology does not simply send studies to "a radiologist." It matches each study to the most qualified reader in the network, based on body region, modality, clinical indication, and urgency.

The workflow follows a predictable pattern:

1. Study arrives. Images are transmitted via PACS integration. Clinical history, ordering physician notes, and study indication are captured automatically.

2. Intelligent routing. The system identifies the body region and modality (e.g., lumbar spine MRI) and routes the study to a fellowship-trained radiologist in the matching subspecialty (e.g., neuroradiology or MSK, depending on the indication).

3. Interpretation. The subspecialist reviews the images on a diagnostic-grade workstation, correlates with clinical history, and dictates a structured report.

4. Report delivery. The finalized report is transmitted back to the ordering facility. Turnaround depends on case complexity, priority, volume, and workflow. A 2020 analysis available through PMC associates subspecialized teleradiology routing with up to a 70% reduction in average turnaround time [14].

5. Quality assurance. Discrepancy tracking, peer review, and outcome correlation close the feedback loop. The best teleradiology groups track their error rates the same way academic departments do.
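The routing step can be sketched in a few lines. This is an illustrative toy, not a description of any production system: the `Study` and `Reader` types, the preference table, the indication rule, and the least-loaded tie-break are all hypothetical, and modality and urgency are left out of the matching logic for brevity.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Study:
    body_region: str
    modality: str
    indication: str


@dataclass(frozen=True)
class Reader:
    name: str
    subspecialty: str
    queue_length: int  # studies already waiting for this reader


# Hypothetical preference table: subspecialties to try, in order, per region.
REGION_PREFERENCES = {
    "brain": ["neuroradiology"],
    "lumbar spine": ["neuroradiology", "msk"],
    "knee": ["msk"],
    "abdomen": ["body"],
    "chest": ["cardiothoracic"],
}


def preferred_subspecialties(study: Study) -> list[str]:
    """Return subspecialties to try, most preferred first."""
    region = study.body_region.lower()
    preferences = list(REGION_PREFERENCES.get(region, []))
    # Hypothetical indication rule: sports-injury spine studies try MSK first.
    if region == "lumbar spine" and "sports" in study.indication.lower():
        preferences.reverse()
    return preferences


def route(study: Study, readers: list[Reader]) -> Optional[Reader]:
    """Pick the least-loaded reader in the most preferred available subspecialty."""
    for subspecialty in preferred_subspecialties(study):
        matches = [r for r in readers if r.subspecialty == subspecialty]
        if matches:
            return min(matches, key=lambda r: r.queue_length)
    return None  # no subspecialty match: hand off for manual assignment


readers = [
    Reader("Reader A", "neuroradiology", queue_length=7),
    Reader("Reader B", "neuroradiology", queue_length=3),
    Reader("Reader C", "msk", queue_length=1),
]
study = Study("Lumbar spine", "MRI", "low back pain with radiculopathy")
assigned = route(study, readers)
print(assigned.name if assigned else "manual assignment")
```

Returning `None` instead of falling back to any available reader is a deliberate choice in this sketch: an unmatched study is surfaced for a human decision rather than silently read outside the subspecialty.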

The advantage of this model is access. A 50-bed community hospital in rural Alabama does not need to employ a full-time neuroradiologist. Through a subspecialty teleradiology partner, brain and spine MRIs can be routed to neuroradiology expertise, the same kind of reader available at an academic medical center. The imaging center keeps its referral relationships. The patient gets a better report. The attorney gets defensible evidence.

At Expert Radiology, this is the foundation of our service. Studies are routed by modality, anatomy, credentials, and available subspecialty expertise. Our team includes neuroradiologists, MSK specialists, spine imaging experts, and more, covering reads across 350+ facilities in all 50 states. The report that comes back is a focused interpretation, delivered with the kind of detail and clarity that clinicians, imaging centers, and attorneys can build on.

Written by

Zain Qazi, M.D.

Musculoskeletal Imaging and Intervention Specialist

Medically reviewed by

Avery J. Knapp Jr., M.D.

Board Certified Radiologist, Neuroradiology

Sources

1. RSNA Radiology, 2022. "Trends in Diagnostic Radiology Residency Fellowship Placement." Fellowship pursuit rate: 96% of graduating residents.

2. Rosenkrantz AB, et al. Radiology, 2018. "The U.S. Radiologist Workforce: An Analysis of Temporal and Geographic Variation by Using Large National Datasets." Generalist practice rate: approximately 6%.

3. Brady AP. "Error and Discrepancy in Radiology: Inevitable or Avoidable?" British Journal of Radiology, 2017;90(1080):20160948. Retrospective error rates: 20% to 30%.

4. RSNA News, 2022. Editorial commentary on diagnostic error as a human-factors problem rather than a technology problem.

5. Herzog R, et al. "Variability in diagnostic error rates of 10 MRI centers performing lumbar spine MRI examinations on the same patient within a 3-week period." The Spine Journal, 2017;17(4):554-561.

6. Rosenkrantz AB, et al. "Best of AJR: Association Between Radiologist Subspecialty and Interpretation Accuracy." American Journal of Roentgenology, 2022. 5.9 million examinations analyzed.

7. Chalian M, et al. "Discordance Rates Between Preliminary and Final Radiology Reports in Musculoskeletal Imaging." American Journal of Roentgenology, 2016;207(5):1079-1084.

8. Zan E, et al. "Discrepancy Rates Between Preliminary and Final Reports in Neuroradiology." American Journal of Neuroradiology, 2012;33(4):E11-E14. Within-subspecialty discrepancy: 2.0%.

9. Lam DL, et al. "Pediatric Radiology Second-Opinion Consultations: Rate of Clinically Significant Discrepancies." American Journal of Roentgenology, 2012;199(4):916-920. Overall disagreement: 41.8%; subspecialist correct: 90.2%.

10. Kostrubiak DE, et al. "Discrepancy Rates in Body MRI Across Subspecialty and Non-Subspecialty Reads." American Journal of Roentgenology, 2020;215(2):348-353. Discrepancy rate: 68.9%.

11. Skeletal Radiology, 2019. Subspecialty vs. non-subspecialty discordance in MSK oncology imaging: 9.2% vs. 27.9%.

12. Harvey L. Neiman Health Policy Institute, 2025. "Projected Radiology Workforce Supply and Demand Through 2055." Workforce growth: 25.7%; demand growth: 26.9%. AAMC physician shortfall projections: 17,000 to 42,000 by 2033.

13. ACR Bulletin, 2026. Radiology workforce update. Burnout rates: 51% to 54%. COVID-era attrition: 50% above historical baseline.

14. PMC, 2020. Analysis of subspecialized teleradiology reporting turnaround times. Subspecialty routing associated with up to 70% reduction in average TAT.