The Role of Second Opinions in Diagnostics

By Dr. Chad Barker

Key Takeaways

- Mayo Clinic research found 88% of second opinions resulted in a new or refined diagnosis. Only 12% of initial diagnoses were fully confirmed.

- Radiology discordance rates vary by subspecialty: 7.7% in neuroradiology, 26.2% in musculoskeletal, and up to 68.9% in body MRI.

- In one trauma-transfer study, 92% of patients whose second read disagreed with the original had their treatment plans changed. This is not an academic exercise.

- Subspecialty radiologists are correct in 82-90% of discrepant cases, compared to error rates of 12-40% for generalists.

- Cleveland Clinic data shows second opinions save an average of $8,705 per case, with 67% resulting in a changed diagnosis or treatment plan.

1. The Mayo Clinic Number Everyone Cites

In 2017, researchers at the Mayo Clinic published a study that forced the medical community to reckon with a deeply uncomfortable question: how often is the first diagnosis wrong? The answer, published in the Journal of Evaluation in Clinical Practice, was startling.

Van Such et al. analyzed 286 patients referred to Mayo Clinic's General Internal Medicine division between January 2009 and December 2010. Every patient already carried a diagnosis from their referring physician. After a thorough re-evaluation by Mayo specialists, the results broke down cleanly: 21% received a completely different diagnosis, 66% had their original diagnosis refined or redefined in a clinically meaningful way, and only 12% had the initial diagnosis fully confirmed.

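Combined, about 88% of patients who sought a second opinion left with a new or meaningfully altered diagnosis. (The published percentages are rounded, which is why 21% and 66% sum to 87% rather than 88%.)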

Now, context matters here. These were general medicine cases, not radiology specifically. The patients were self-selected and complex enough to warrant referral to a top academic center. Nobody is claiming that 88% of all diagnoses in community practice are wrong. But this was peer-reviewed research from one of the most respected institutions in the world, and the numbers are too large to dismiss. The study made a straightforward point: a single evaluation, even by a competent physician, has inherent limitations. Diagnostic certainty improves with additional expert review.

For radiology, the implications are direct. If this level of diagnostic revision happens in general medicine, what happens when you apply the same rigor to imaging interpretation?

2. Radiology-Specific Discordance Rates

The short answer: it depends on the subspecialty. Published discordance rates between initial reads and subspecialty second opinions range widely, and the variation itself tells an important story about where diagnostic uncertainty lives.

Neuroradiology shows the lowest discordance at 7.7%. Zan et al. published this figure in Radiology in 2010, reviewing second opinions in neuro-specific cases. This relatively low rate reflects the maturity of neuroradiology as a subspecialty and the well-established imaging protocols for brain and spine pathology.

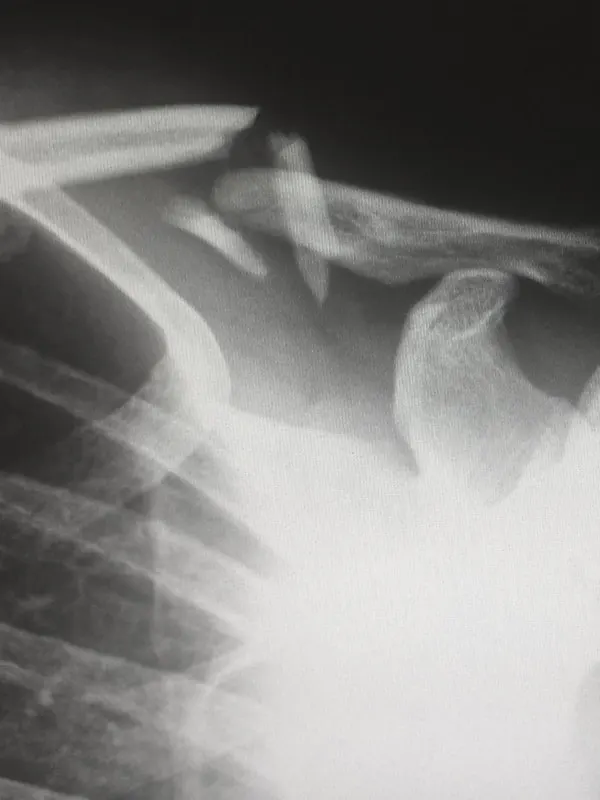

Musculoskeletal radiology sits higher at 26.2%, per Chalian et al. in the American Journal of Roentgenology (2016). That means roughly one in four MSK second opinions produced a different interpretation. The most commonly discrepant findings involved ligament and tendon pathology, precisely the structures that matter most in personal injury and workers' compensation cases.

Body MRI hits the highest published discordance: 68.9%. Kostrubiak et al. reported this in the AJR in 2020. Body imaging is inherently more variable because of the number of organ systems in play, the subtlety of pathology on MRI sequences, and the fact that many initial reads are performed by generalists without dedicated body imaging fellowship training.

Other subspecialties fall between these extremes. Breast imaging shows 16% discordance (Lam et al., JACR, 2019). Pediatric radiology comes in at 21.7% for major discrepancies, with a total discordance rate of 41.8% (Lam et al., AJR, 2012). Trauma transfer cases carry a 12% discordance rate, per a 2022 JACR study evaluating outside imaging re-read at a level-one trauma center.

These numbers deserve attention. If you are a provider ordering MRI or CT and relying on a single generalist read, the data says there is a meaningful probability the interpretation is incomplete or incorrect. The question is whether you find out before or after a clinical decision is made.
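To make these rates concrete, the short sketch below converts the percentages quoted above into expected counts of discordant reads per 1,000 studies. It is illustrative only: the dictionary and function names are assumptions introduced here, and the published rates come from referral populations, so they may not transfer to an unselected caseload.

```python
# Discordance rates quoted in this section (percent of second reads that
# disagreed with the initial interpretation). Names are illustrative.
DISCORDANCE_PCT = {
    "neuroradiology": 7.7,
    "trauma transfer": 12.0,
    "breast imaging": 16.0,
    "musculoskeletal": 26.2,
    "pediatric (total)": 41.8,
    "body MRI": 68.9,
}


def expected_discordant(rate_pct: float, n_studies: int) -> float:
    """Expected number of discordant reads among n_studies second reads."""
    return rate_pct / 100.0 * n_studies


if __name__ == "__main__":
    # Print subspecialties from lowest to highest discordance.
    for name, rate in sorted(DISCORDANCE_PCT.items(), key=lambda kv: kv[1]):
        count = expected_discordant(rate, 1000)
        print(f"{name:20s} {rate:5.1f}%  ~{count:.0f} per 1,000 reads")
```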

3. When the Second Read Changes Everything

Discordance rates are academic until you connect them to clinical consequences. Several studies have done exactly that, and the findings make the case for second opinions far more urgent than the percentages alone suggest.

A 2022 study from UW Medicine, published in the Journal of the American College of Radiology, tracked what happened to trauma patients whose outside imaging was re-read by the receiving institution's radiologists. Among cases with discrepant reads, 92% of patients had their treatment plans changed, and 81% had extended emergency department stays as a direct result of the revised interpretation. These were not subtle "nice to know" distinctions. They were clinically actionable findings that altered the course of care.

Oncology provides equally striking examples. A 2025 study in European Radiology by Golia Pernicka et al. examined rectal cancer staging MRIs sent for subspecialty second opinions. The discordance rate was 53.8%. Among those discrepant cases, surgical planning changed in 38-46% of patients. Think about that: roughly 40% or more of the patients whose initial and second reads disagreed had their surgical approach modified.

Head and neck cancer tells a similar story. A 2013 study in the Journal of Otolaryngology - Head & Neck Surgery found that subspecialty second opinions changed cancer staging in 56% of cases and altered management decisions in 38%. When staging changes, everything downstream changes: surgical approach, radiation fields, chemotherapy protocols, and prognosis discussions with patients and families.

The pattern is consistent across studies and subspecialties. When a second read disagrees with the first, it is not a coin flip. The second read carries clinical consequences that affect real patients, real treatment timelines, and real outcomes.

4. The Financial Case for Second Opinions

Diagnostic accuracy is a clinical imperative. It is also a financial one. Wrong diagnoses cost money at every level: unnecessary procedures, inappropriate treatments, extended recovery timelines, and, in litigation, case values that collapse under scrutiny.

The most comprehensive financial data comes from the Cleveland Clinic's partnership with Vital Statistics Online. Their 2024 analysis of virtual second opinion (VSO) outcomes found that the average second opinion saved $8,705 per case. For high-cost cases, the average savings jumped to $100,911. Among patients who had surgery recommended at their initial evaluation, 85% avoided surgery after the second opinion revised the diagnosis or treatment plan. Overall, 67% of second opinions resulted in a changed diagnosis or modified plan.

For attorneys handling injury cases, the financial calculus works differently but is equally compelling. A missed finding on the initial read means an undervalued case. A vague or hedged interpretation gives opposing counsel ammunition. A subspecialty second opinion that identifies previously unreported pathology does not just correct a medical record; it can add five or six figures to case value.

Consider a concrete example from our own practice: a $500 second opinion that identified disc pathology overlooked on the initial read produced an additional $15,000 in recoverable case value. That is a 30x return. The economics of second opinions are not speculative. They are documented.
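The arithmetic behind that multiple can be checked in a few lines. This is a minimal sketch: the function names are hypothetical, and the only inputs are the two dollar figures quoted above.

```python
def return_multiple(cost: float, added_value: float) -> float:
    """Gross return multiple: added case value divided by the second-opinion fee."""
    if cost <= 0:
        raise ValueError("cost must be positive")
    return added_value / cost


def net_gain(cost: float, added_value: float) -> float:
    """Added case value remaining after the second-opinion fee is subtracted."""
    return added_value - cost


if __name__ == "__main__":
    fee, added = 500.0, 15_000.0  # figures from the example above
    print(return_multiple(fee, added))  # 30.0
    print(net_gain(fee, added))         # 14500.0
```

Note that 30x is the gross multiple. Net of the $500 fee, the gain in this example is $14,500.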

5. The Hedge Word Tax

There is a problem that sits upstream of diagnostic discordance, one that second opinions expose with uncomfortable regularity. It is the language of the report itself. Lee and Whitehead published a landmark study in Current Problems in Diagnostic Radiology in 2017 that quantified something practicing clinicians already suspected: radiologists and referring physicians interpret the same words very differently.

The researchers tested 36 commonly used radiology terms across both populations. Eleven of the 36 terms were interpreted with statistically significant disagreement. The most revealing example was "consistent with." Radiologists who used that phrase intended to communicate 75-100% diagnostic certainty. Primary care physicians who read it understood it as less than 50% certainty. The same two words. Opposite interpretations.

The downstream effects on clinical behavior were measurable. When a report described a "benign cyst," only 2% of referring physicians ordered follow-up imaging. Change the language to simply "cyst" and 22% ordered follow-up. "Most likely a cyst" pushed the follow-up rate to 46%. And "most likely a cyst, tumor not excluded" triggered follow-up imaging 75% of the time.

Each layer of hedging added cost, anxiety, and delay. None of it changed the underlying pathology. The finding was the same in every scenario. What changed was the radiologist's willingness to commit to a clear interpretation.
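As a rough illustration of what those percentages mean in volume, the sketch below estimates how many additional follow-up studies each phrasing would generate relative to "benign cyst". The report volume of 1,000 is a hypothetical input, not a figure from the study, and the names are illustrative.

```python
# Follow-up imaging rates (percent) by report phrasing, as quoted above.
FOLLOW_UP_PCT = {
    "benign cyst": 2,
    "cyst": 22,
    "most likely a cyst": 46,
    "most likely a cyst, tumor not excluded": 75,
}


def extra_follow_ups(n_reports: int, phrase_pct: float, baseline_pct: float = 2) -> float:
    """Additional follow-up studies versus the baseline phrasing."""
    return n_reports * (phrase_pct - baseline_pct) / 100


if __name__ == "__main__":
    n = 1000  # hypothetical number of reports describing the same finding
    for phrase, pct in FOLLOW_UP_PCT.items():
        print(f"{phrase!r}: {extra_follow_ups(n, pct):.0f} extra follow-ups per {n} reports")
```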

For attorneys, hedge words are an even more direct liability. "Cannot exclude" and "clinical correlation recommended" are phrases that give opposing counsel exactly what they need to cast doubt on injury causation. A second opinion from a subspecialist who writes in direct, unambiguous language strips away that ambiguity and replaces it with diagnostic certainty. Your MRI report can be 100% clinically accurate and still be completely useless if the language leaves room for six different interpretations.

6. When to Get a Second Opinion

Not every imaging study requires a second read. But certain clinical situations carry high enough diagnostic stakes that a second opinion should be standard practice, not an afterthought. Based on the published evidence, here are eight scenarios where a second opinion adds clear, measurable value.

- 1. Inconclusive or equivocal results. If the initial report uses phrases like "cannot exclude," "correlate clinically," or "recommend follow-up," a subspecialty second read often resolves the ambiguity outright.

- 2. Persistent symptoms with a "normal" report. Patients who continue to report pain or functional limitation despite imaging read as normal deserve a closer look. Subtle ligament tears, early degenerative changes, and small herniations are frequently missed on initial reads.

- 3. Before major surgery. The Cleveland Clinic data speaks for itself: 85% of patients who were initially recommended for surgery avoided it after a second opinion. Confirming the surgical indication with a second read protects both the patient and the surgeon.

- 4. Complex or rare pathology. Unusual tumors, congenital variants, and atypical presentations challenge even experienced generalists. These cases demand fellowship-trained eyes with daily exposure to the relevant anatomy.

- 5. Oncologic staging. With 53.8% discordance in rectal cancer and 56% staging changes in head and neck cancer, the evidence is unambiguous. Cancer staging imaging should be read by a subspecialist, period.

- 6. Pediatric imaging. An estimated 85% of pediatric imaging studies are interpreted by radiologists without pediatric fellowship training. With a 21.7% major discrepancy rate documented in the literature, children are an especially vulnerable population for diagnostic error.

- 7. Outside studies at a new institution. Every time a patient transfers between hospitals or clinics with imaging from a different facility, the receiving institution is making clinical decisions based on a stranger's interpretation. Re-reading those images is basic risk management.

- 8. Personal injury and litigation cases. In legal proceedings, the radiology report is evidence. An underdetailed or hedged report weakens the plaintiff's position. A subspecialty second opinion with clear language and precise characterization strengthens it.

The common thread across all eight: diagnostic certainty matters, and a single read from a single radiologist is sometimes not enough to achieve it.

7. Subspecialty Second Opinions vs. General

Not all second opinions are created equal. The published literature makes a sharp distinction between a second read from another generalist and a second read from a fellowship-trained subspecialist. The data overwhelmingly favors the subspecialist.

Chalian et al. (2016) found that in MSK cases with discordant reads, the subspecialist was correct 82% of the time. Zan et al. (2010) reported similar numbers in neuroradiology, with the subspecialist confirmed correct in 84% of disagreements. The pediatric data is even more decisive: Lam et al. (2012) found the subspecialist was correct in 90.2% of discrepant cases.

On the flip side, Brady's 2017 review in the British Journal of Radiology compiled error rates across the field. General radiology interpretation errors ranged from 12.4% to 40%, depending on the study and modality. Subspecialty error rates in the same review fell between 2.0% and 2.7%. That is not a marginal improvement. Depending on which ends of the two ranges are compared, the generalist error rate is roughly 5 to 20 times higher.
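The size of the gap between those two ranges can be checked directly. The sketch below, with illustrative names, computes the narrowest and widest ratios implied by the quoted figures. It assumes the ranges are comparable across studies, which the review itself may not guarantee.

```python
# Error-rate ranges (percent) quoted from the review above.
GENERAL_RANGE = (12.4, 40.0)
SUBSPECIALTY_RANGE = (2.0, 2.7)


def ratio_bounds(high_group, low_group):
    """Return the (narrowest, widest) ratio between two percentage ranges."""
    narrowest = high_group[0] / low_group[1]  # lowest general vs highest subspecialty
    widest = high_group[1] / low_group[0]     # highest general vs lowest subspecialty
    return narrowest, widest


if __name__ == "__main__":
    lo, hi = ratio_bounds(GENERAL_RANGE, SUBSPECIALTY_RANGE)
    print(f"Generalist error rate is {lo:.1f}x to {hi:.1f}x the subspecialty rate")
    # prints: Generalist error rate is 4.6x to 20.0x the subspecialty rate
```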

Additional data from MSK oncology imaging (published in Skeletal Radiology, 2019) showed a discrepancy rate of 9.2% for subspecialty reads versus 27.9% for generalist reads. When the stakes are highest, when the pathology is a tumor and the clinical question is whether to cut, generalist reads were still about three times as likely to be discrepant.

The reason is not intelligence or effort. General radiologists are well-trained, hardworking physicians. The difference is volume and specificity. A fellowship-trained neuroradiologist reads thousands of brain MRIs per year. A general radiologist reading everything from chest X-rays to knee MRIs to abdominal CTs simply cannot develop the same depth of pattern recognition in any single area.

For patients, providers, and attorneys: if you are going to seek a second opinion, make sure it comes from a subspecialist. The studies cited here document the value of subspecialist re-reads. They offer no comparable evidence that a generalist re-read of a generalist interpretation moves the needle.

Written by

Chad Barker, M.D.

Musculoskeletal Specialist

Medically reviewed by

Avery J. Knapp Jr., M.D.

Board Certified Radiologist, Neuroradiology

Sources

- Van Such M, Lohr R, Beckman T, Naessens JM. Extent of diagnostic agreement among medical referrals. Journal of Evaluation in Clinical Practice. 2017;23(4):870-874.

- Zan E, Yousem DM, Carone M, Lewin JS. Second-opinion consultations in neuroradiology. Radiology. 2010;255(1):135-141.

- Chalian M, Del Grande F, Thakkar RS, et al. Second-opinion subspecialty consultations in musculoskeletal radiology. American Journal of Roentgenology. 2016;206(6):1217-1221.

- Kostrubiak DE, Broncano J, Garg T, et al. Body MRI second-opinion consultations. American Journal of Roentgenology. 2020;214(4):904-910.

- Lam DL, Larson DB, Eisenberg JD, et al. Communicating potential follow-up recommendations to referring physicians: Breast imaging second opinions. Journal of the American College of Radiology. 2019;16(3):344-350.

- Lam WW, Peng WS, Luk HN, et al. Impact of subspecialty second-opinion consultations on pediatric radiology. American Journal of Roentgenology. 2012;199(6):1363-1369.

- UW Medicine. Discordant imaging interpretations and clinical impact in trauma transfer patients. Journal of the American College of Radiology. 2022.

- Cleveland Clinic / Vital Statistics Online. Virtual second opinion outcomes analysis. BusinessWire. 2024.

- Lee MH, Whitehead MT. Radiology reports: What YOU think you're saying and what THEY think you're saying. Current Problems in Diagnostic Radiology. 2017;46(3):186-195.

- Brady AP. Error and discrepancy in radiology: Inevitable or avoidable? British Journal of Radiology. 2017;90(1080):20160948.

- Golia Pernicka JS, et al. Subspecialty second opinions for rectal cancer MRI staging. European Radiology. 2025.

- Loevner LA, et al. Impact of second-opinion interpretation on head and neck cancer staging and management. Journal of Otolaryngology - Head & Neck Surgery. 2013;42(1):30.

- Wibmer AG, et al. Subspecialty vs. generalist accuracy in musculoskeletal oncology imaging. Skeletal Radiology. 2019;48(7):1053-1060.